A machine vision sensor inspired by how we see things

Machine vision systems comprise cameras and computers that capture and process images for tasks such as facial recognition. They need to be able to “see” objects in a wide range of lighting conditions, which demands intricate circuitry and complex algorithms. Such systems are rarely efficient enough to process a large volume of visual information in real time—unlike the human brain.

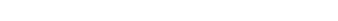

However, new bioinspired sensors developed by researchers led by Dr Chai Yang, Associate Professor, Department of Applied Physics, and Assistant Dean (Research), Faculty of Applied Science and Textiles, may offer a solution: the sensors adapt directly to different light intensities instead of relying on backend computation.

Natural light intensity spans a wide range of 280 dB. The new sensors have an effective range of up to 199 dB, compared with only 70 dB for conventional silicon-based sensors.
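To give a feel for these figures, the short sketch below converts a dynamic range in decibels into a ratio between the brightest and dimmest detectable light. It assumes the 20·log10 convention commonly used for image-sensor dynamic range; the article does not state which convention applies, so the `factor` parameter and the function name `db_to_ratio` are illustrative assumptions rather than anything taken from the research.

```python
def db_to_ratio(db: float, factor: float = 20.0) -> float:
    """Convert a dynamic range in dB to a brightest-to-dimmest intensity ratio.

    Assumes ratio = 10 ** (db / factor). factor=20 is a common image-sensor
    convention (an assumption here); use factor=10 for a power-ratio convention.
    """
    return 10 ** (db / factor)


# Figures quoted in the article.
ranges_db = {
    "natural light": 280,
    "new sensors": 199,
    "conventional silicon sensors": 70,
}

for name, db in ranges_db.items():
    # Print the exponent rather than the full number to keep the output readable.
    print(f"{name}: {db} dB is roughly 10^{db / 20:.2f} to 1")
```

Under that assumption, every additional 20 dB corresponds to a tenfold wider span of light intensity, which is why the gap between 70 dB and 199 dB is far larger than the raw numbers suggest.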

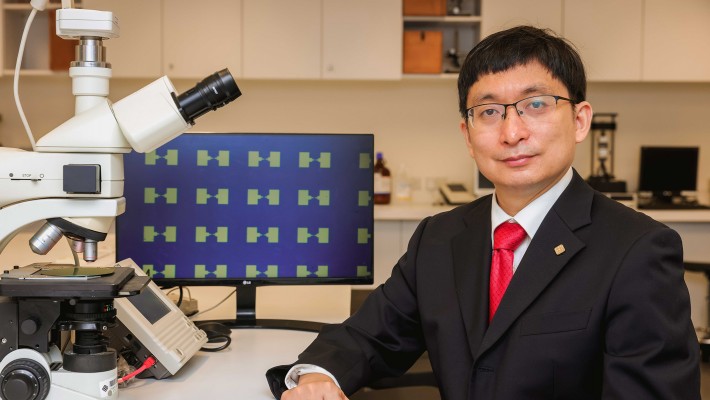

To achieve this greater range, the research team developed light detectors, called phototransistors, using a dual layer of atomically thin molybdenum disulphide, a semiconductor with unique electrical and optical properties. The researchers then introduced “charge trap states” to the dual layer to store light information and control the device’s ability to detect light.

Each of the new vision sensors is made up of arrays of such phototransistors. They mimic the rod and cone cells of the human eye, which are respectively responsible for detecting dim and bright light. As a result, the sensors can detect objects in differently lit environments as well as switch between, and adapt to, varying levels of brightness.

Thanks to the team’s research, future autonomous vehicles and facial detection cameras might have human-like vision. The research was published in Nature Electronics.